Atiz in the news – The Digital Book Drive’s Left-Behinds

April 24, 2009 on 2:32 pm | In General, News

By Jack M. Germain

TechNewsWorld

04/22/09 4:00 AM PT

The quest to turn the pages of every last book on the planet into data — redundantly stored and universally accessible — sounds noble enough. However, technological issues stand in the way. Not the least of them is the problem of deciding on a universal format for the world’s digital library.

The older bits of the world’s accumulated knowledge, bound together in volumes of printed books and magazines, are slowly disappearing. Out-of-print renditions often disappear forever. Libraries with limited shelf space often replace seldom-used titles with newer tomes. A far smaller portion of printed matter makes it to page-scanning processes for preservation in digital form.

In the race to build a universal digital library, many important books and documents are being left behind: special edition books, religious books, historical documents, and books found in small local libraries or in private collections. Left undigitized, the information inside them will fade as the paper deteriorates.

Despite the best efforts of organizations intent on creating exhaustive digital libraries of all human knowledge, their projects are still too fragmented to produce a reliable, universal, digital repository of all printed goods.

Often, corporate decisions and budgetary considerations mean books are left behind.

Google’s (Nasdaq: GOOG) Book Project and Project Gutenberg are two of the better-known efforts to convert the printed page to a digitally viewable form. Usually, large libraries and university research directors form alliances to take on the challenge of digitizing their own collections. Their ‘leave no book behind’ mentality is filtering down to smaller businesses with limited revenue, driven by improvements in scanning and storage technologies. This is creating a balance of power, so to speak, that allows those without the reach and capital of Google to join in the digitization movement.

“People are doing this with scanners of all kinds. Hardware is getting cheaper and better. Nowadays, a lot of it is done with digital cameras. They have high enough resolution today to give very good results. It’s almost like going back to the microfiche days,” John Sarnowski, director of The ResCarta Foundation and director of Imaging Products for Northern Micrographics, told TechNewsWorld.

Flawed System

Northern Micrographics is a service bureau that converts paper and film into electronic format. The company has been digitizing printed pages since the early 1980s. In that time span, Sarnowski has observed a big misconception about how the process works. Contrary to popular belief, the job of converting from physical page to digital screen does not end with the scanning or camera image.

“Shooting the pages is only one-fifth of the job,” he explained. “There is a lot of technology built around getting the page numbers and the physical layout to match the original printing. The rest of the job involves getting the metadata right.”

That involves a detailed process of making the pages match. For instance, the digital pages cannot simply be listed under the opaque sequential file names that a scanner or camera usually generates, such as 00001.scn. Page inserts, titles, author, and other data have to be coordinated in the finished digital product.
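
As a rough illustration of that matching step, the sketch below pairs sequential scan file names with the page labels a reader would see in print. It is only a minimal example under stated assumptions: the names `PageRecord`, `to_roman` and `label_pages` are hypothetical, the rule that front matter carries lowercase Roman numerals is an assumed convention, and none of it reflects the tools Northern Micrographics actually uses.

```python
from dataclasses import dataclass


@dataclass
class PageRecord:
    scan_file: str  # name produced by the scanner, e.g. "00001.scn"
    label: str      # page label as printed in the book, e.g. "ii" or "12"


def to_roman(n: int) -> str:
    """Convert a positive integer to an uppercase Roman numeral."""
    numerals = [
        (1000, "M"), (900, "CM"), (500, "D"), (400, "CD"),
        (100, "C"), (90, "XC"), (50, "L"), (40, "XL"),
        (10, "X"), (9, "IX"), (5, "V"), (4, "IV"), (1, "I"),
    ]
    parts = []
    for value, symbol in numerals:
        while n >= value:
            parts.append(symbol)
            n -= value
    return "".join(parts)


def label_pages(scan_files, front_matter_count, first_numbered=1):
    """Pair each scan file with a printed page label.

    Assumption (hypothetical): the first `front_matter_count` scans are
    front matter numbered i, ii, iii, ...; the remaining scans are body
    pages numbered from `first_numbered` upward.
    """
    records = []
    for index, name in enumerate(sorted(scan_files)):
        if index < front_matter_count:
            label = to_roman(index + 1).lower()
        else:
            label = str(first_numbered + index - front_matter_count)
        records.append(PageRecord(scan_file=name, label=label))
    return records


if __name__ == "__main__":
    scans = ["00001.scn", "00002.scn", "00003.scn", "00004.scn"]
    for record in label_pages(scans, front_matter_count=2):
        print(record.scan_file, "->", record.label)
```

A real workflow would also have to handle unnumbered inserts, plates and skipped pages, and attach the title, author and other catalog data, which is the part of the job Sarnowski describes as the hard four-fifths.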

That’s been the problem since day one, and it’s what the technology has to overcome, according to Sarnowski.

Your Way or Mine

Another part of the problem in digitizing printed books into electronic media is the end-user format. There is no standard protocol for viewing digitized conversions so that anybody with access can read them.

For example, in 1994, Sarnowski’s company got involved with Cornell University and the University of Michigan on one of the earliest digital conversion projects in the United States. Optical Character Recognition (OCR) initially cost US$14 per page, but as the technology got better, the cost dropped. At the end of the three-and-a-half-year project, it was down to a few cents per page.

When the company asked school officials how they wanted the data back, the officials responded, “How would you like to send it back?” said Sarnowski.

“There were no standards then. There still aren’t. The library people at Cornell didn’t know how to extract the data out of their database system so we could integrate the digital pages. We had to work out all of those details,” he explained.

Web No Solution

The Internet is not a true solution to providing universal access to a digital book library, either. Standardization does not always exist on the Web.

To see the problem, think of the digitizing process in terms of other technology. For instance, you can put a sound file in an MP3 player anywhere and it works, as there is only one standard. Not so with DVDs. Different parts of the world use their own region codes and video formats.

The same lack of universal standards plagues those working to create a universal digital book library.

“The big problem at every major research center, including Google, is there is no standard for dealing with digital pages. To this day, we still do not know how Google is storing the book data and what their format is,” Sarnowski said.

Starting From Digital

Some publishers start out in the digital form, so printed books do not have to be converted. While this approach does not solve the problem of saving books left behind, it at least does not add to that problem.

In the case of publishers such as Springer Publishing, authors must now submit their manuscripts in Microsoft (Nasdaq: MSFT) Word or a similar software file format. The company publishes all of its collections in both PDF (Portable Document Format) and XML (Extensible Markup Language).

“We did digitize all of our journal collections all the way back to the 1840s. We sent the physical pages to a vendor who made them available digitally through a scanning process. Somebody was inserting the metadata during that process,” George Scotti, global marketing director at Springer, told TechNewsWorld.

Saving Specialties

Springer does not worry about intellectual property theft involving its easy-to-get digital library offerings. The collection is not mainstream reading. Still, it is available on Amazon’s (Nasdaq: AMZN) Kindle e-book reader and other such devices.

Springer specializes in publishing scientific research. Since researchers already do most of their work online, the company’s customers are usually familiar with the electronic format, according to Scotti.

“We have a very liberal DRM (digital rights management) policy. Once you buy the content, you can do whatever you want with it. We’ve only had a few cases where it was a problem putting it on a Web site. But it’s not causing us a great deal of concern,” Scotti said.

Everybody Else

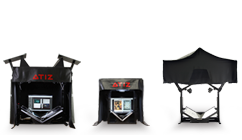

Another solution in the digital mix, offered by Atiz.com, could be ideal for small companies and individual authors who want to preserve their printed pages digitally.

As long as the user owns the copyright, there is no legal entanglement, according to Atiz President Nick Warmock. The company’s biggest customers include academic libraries around the world, municipalities for deed registries, students and service bureaus.

Three of Atiz’s products give consumers and small organizations inexpensive devices for making their own decisions on what to preserve digitally rather than going through outside services like Gutenberg and Google. In 2006, Warmock partnered with an associate who invented a way to have a mechanical arm turn the pages of books being scanned. The resulting BookDriveDIY (Do It Yourself) includes the cameras, mechanical setup and proprietary software. A related product released in 2007, BookSnap, targets students and others who want to digitize reams of notes. Atiz released BookDrive Pro in January of this year. The product prices range from $1,595 to $15,000.

“We envision one day having a searchable repository for all digitized content. But that hasn’t been worked out yet. The power of such a universal library would be incredible. We’d like to get involved in that project, but too many things would have to be worked out,” Warmock told TechNewsWorld.

Ultimate Solution?

The encumbrances blocking a single set of standards — and the financial costs associated with forming a universal digital library — may be solvable, according to Sarnowski. He heads the ResCarta Foundation, a nonprofit organization established to encourage the development and adoption of a single set of open community standards for digital document warehousing.

Northern Micrographics, partially in conjunction with the foundation, promotes an open source raster format. The company offers open source tools free to download in an effort to encourage the use of a standardized data format. The strategy includes working with metadata standards and the same standards the Library of Congress uses.

“We’re fighting for the long-term preservation of data. We’re fighting to stop the loss of original data. It’s been an uphill battle for five years to convince people at large institutions to adopt our system. We’re waging a guerrilla war. We’re saying, do it this way,” said Sarnowski.

Biggest Worry

The digital divide problem may not go away. In fact, Sarnowski worries, it could become worse. “Twenty years from now, when the next generation of storage comes along, we’re going to have to move all this stuff. If you only had a handful of standards, you could run them through a converter to make the move — but that isn’t the case.”
